Data-driven instruction (DDI), whereby schools and teachers use student data in a formative way to improve instructional practices and student learning, is receiving increasing attention and support as a promising approach to school reform. But many teachers feel unprepared to use student data to inform their instruction. Moreover, there is little evidence on whether DDI improves student achievement.

In this study, Mathematica conducted an experimental impact evaluation of the effects of providing additional support for DDI on teacher practices and student achievement. The study team recruited 102 elementary schools from 12 districts around the country into the study. Half of the schools were randomly assigned to receive funding for a data coach of their choosing as well as intensive professional development for coaches and school leaders on helping teachers use student data to inform their instruction. The remaining schools received no additional funding for a data coach or professional development. The study examined the implementation of the support for DDI in the schools that received the intervention, and measured its impacts on teacher and student outcomes after a one-and-a-half-year implementation period. The DDI intervention began in the study’s treatment schools in December 2014, and outcomes were measured through spring 2016 surveys of school principals and teachers and through student-level administrative data covering the 2015-2016 school year.
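The random assignment described above can be illustrated with a minimal sketch. This is not the study's actual procedure: the summary does not say whether assignment was stratified by district or how it was carried out, so the example assumes simple randomization across all 102 schools. The school identifiers, the `assign_schools` function, and the seed are hypothetical placeholders.

```python
import random


def assign_schools(school_ids, seed=0):
    """Randomly split schools into two equal-sized groups.

    Assumption: simple (unstratified) randomization. The study summary
    does not describe the actual assignment procedure.
    """
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    shuffled = list(school_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {
        "treatment": sorted(shuffled[:half]),
        "control": sorted(shuffled[half:]),
    }


# 102 placeholder school IDs (hypothetical, not the study's schools)
schools = [f"school_{i:03d}" for i in range(1, 103)]
groups = assign_schools(schools)
print(len(groups["treatment"]), len(groups["control"]))  # prints "51 51"
```

Because assignment depends only on chance, the two groups are expected to be similar on average before the intervention, which is what allows later differences in outcomes to be attributed to the support for DDI.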

All of the study’s treatment schools hired half-time data coaches, and most of the coaches and school leaders attended the study’s professional development sessions and met regularly with teachers. Correspondingly, treatment school teachers reported receiving significantly more data-related professional development and support from coaches and school leaders than did control school teachers. However, the support for DDI provided in the intervention did not lead to significant improvements in key teacher and student outcomes. In particular:

- The study’s DDI coaching and professional development did not increase teachers’ data use or change their instructional practices. Teachers in treatment and control schools were similarly likely to report engaging in data use and instructional practices associated with DDI.

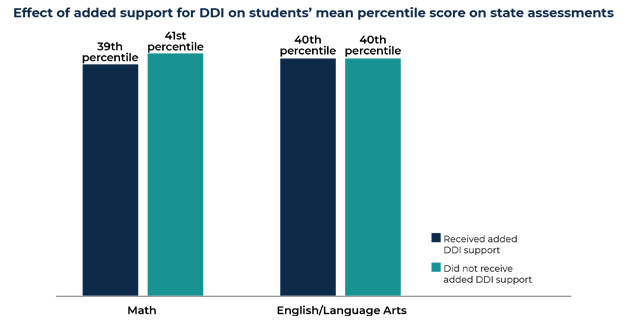

- The study’s support for DDI did not improve student achievement. On average, treatment and control school students had similar achievement in math and English/language arts, scoring at about the 40th percentile on their state assessments in each subject.

These findings are consistent with other recent research showing that support for DDI has not improved student achievement. Findings from this study’s implementation analysis suggest possible avenues for improving how DDI is supported and implemented. For example, all the data coaches in this study were experienced educators, but few had previously worked as data coaches. Perhaps inexperienced data coaches need more professional development than was provided in this study. The emphasis of the professional development may also need to be refined. Most DDI support programs emphasize interpreting student data in their professional development, but it is possible that greater emphasis is needed on helping teachers identify, select, and put in place appropriate instructional strategies.